What is it?

Researchers have uncovered three serious vulnerabilities in LangChain and LangGraph—two widely used open-source frameworks powering modern AI applications.

These tools are foundational for building Large Language Model (LLM) apps, meaning they often sit right in the middle of your data, workflows, and integrations.

The issue?

These flaws create multiple paths for attackers to extract sensitive data, including:

- Files stored on your system

- API keys and environment secrets

- Internal AI conversation history

⚠️ Why should you care?

This isn’t just another vulnerability—it’s a direct line into your AI stack.

With tens of millions of downloads per week, these frameworks are deeply embedded across enterprise environments. If exploited, attackers could:

- Access sensitive files (including Docker configuration files)

- Steal API keys and credentials

- Extract confidential prompts and AI-generated outputs

- Run malicious database queries

Even more concerning:

AI frameworks like LangChain don’t operate in isolation—they’re part of a massive dependency chain. One flaw can ripple across:

- Integrated tools

- Custom AI apps

- Third-party extensions

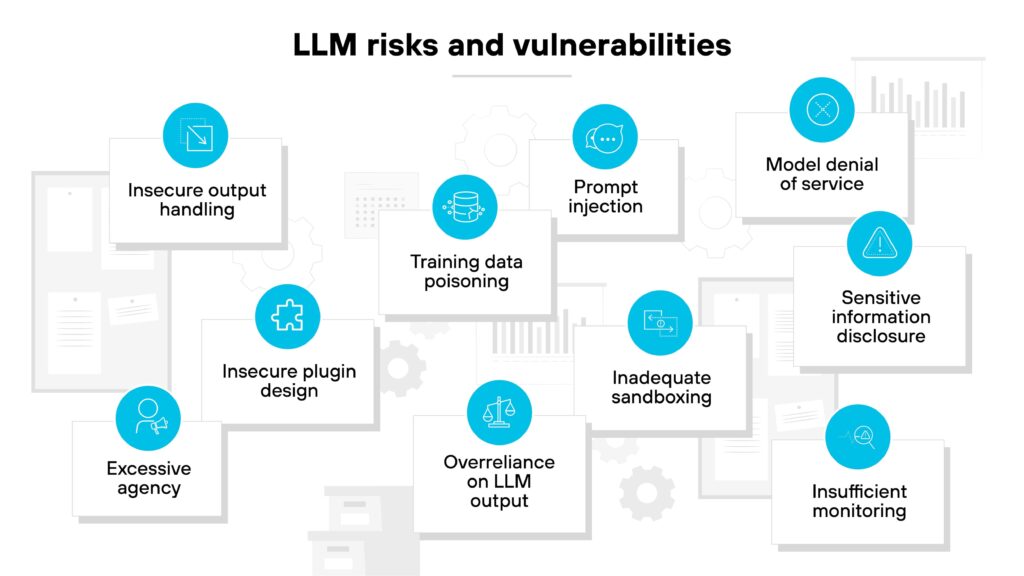

👉 We’re now seeing a pattern: AI tools are inheriting classic vulnerabilities (SQL injection, deserialization, path traversal)—but with much bigger consequences.

🔍 The Vulnerabilities (Quick Breakdown)

- CVE-2026-34070 (High): Path traversal → unauthorized file access

- CVE-2025-68664 (Critical): Unsafe deserialization → leaks API keys & secrets

- CVE-2025-67644 (High): SQL injection → database manipulation & data exposure
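
To make one of these bug classes concrete, here is a minimal, generic sketch of SQL injection using Python's built-in `sqlite3` module. It is not the vulnerable LangGraph code; the `notes` table and both helper functions are hypothetical and exist only for illustration.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two owners' notes."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE notes (owner TEXT, body TEXT)")
    conn.executemany(
        "INSERT INTO notes VALUES (?, ?)",
        [("alice", "alice's draft"), ("bob", "bob's secret")],
    )
    return conn


def notes_unsafe(conn: sqlite3.Connection, owner: str) -> list:
    # Vulnerable: the input is pasted into the SQL text, so a quote
    # character in `owner` can change the meaning of the query.
    query = f"SELECT body FROM notes WHERE owner = '{owner}'"
    return [row[0] for row in conn.execute(query)]


def notes_safe(conn: sqlite3.Connection, owner: str) -> list:
    # Safer: the input is bound as a parameter and is only ever
    # treated as a value, never as SQL.
    query = "SELECT body FROM notes WHERE owner = ?"
    return [row[0] for row in conn.execute(query, (owner,))]


if __name__ == "__main__":
    conn = build_demo_db()
    payload = "alice' OR '1'='1"
    print("unsafe:", notes_unsafe(conn, payload))
    print("safe:  ", notes_safe(conn, payload))
```

With the payload above, the string-built query returns every owner's notes, while the parameterized query returns nothing because no owner has that literal name.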

💡 Real-world impact: attackers can chain these flaws together to exfiltrate sensitive enterprise data.

🚨 This is happening fast

A related flaw in Langflow was exploited within 20 hours of disclosure.

That’s the reality now:

Attackers are moving at AI speed.

✅ What can you do?

1. Patch immediately

- Update to at least these versions:

  - LangChain-Core ≥ 1.2.22

  - LangGraph SQLite ≥ 3.0.1
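
A quick way to check an environment against these minimums is to read the installed versions with Python's standard `importlib.metadata`. This is a rough sketch: the PyPI distribution names below (`langchain-core`, `langgraph-checkpoint-sqlite`) are our assumption for the two components named above, and the version parser is deliberately simple, so confirm the results against your own lockfile.

```python
from importlib import metadata

# Minimum patched versions as listed in this post. The distribution
# names are assumptions; adjust them to match your environment.
MIN_VERSIONS = {
    "langchain-core": "1.2.22",
    "langgraph-checkpoint-sqlite": "3.0.1",
}


def version_tuple(version: str) -> tuple:
    """Turn '1.2.22' into (1, 2, 22); stop at the first non-numeric part."""
    parts = []
    for piece in version.split("."):
        if not piece.isdigit():
            break  # pre-release suffixes such as '22rc1' end the parse
        parts.append(int(piece))
    return tuple(parts)


def is_patched(installed: str, minimum: str) -> bool:
    """True when the installed version is at or above the minimum."""
    return version_tuple(installed) >= version_tuple(minimum)


def audit() -> dict:
    """Map each package to 'ok', 'outdated', or 'not installed'."""
    report = {}
    for name, minimum in MIN_VERSIONS.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = "not installed"
            continue
        report[name] = "ok" if is_patched(installed, minimum) else "outdated"
    return report


if __name__ == "__main__":
    for name, status in audit().items():
        print(f"{name}: {status}")
```

Because the parser stops at the first non-numeric part, a pre-release such as `1.2.22rc1` is reported as outdated, which errs on the side of caution.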

2. Lock down your AI environment

- Restrict filesystem access

- Isolate API keys and secrets

- Use least-privilege access controls
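
Restricting filesystem access can start in application code. The sketch below shows one common guard against path traversal: resolve the requested path and refuse anything that lands outside an approved base directory. The function name and the example directory are hypothetical, and this complements OS-level or container-level restrictions rather than replacing them.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Return the resolved path only if it stays inside base_dir."""
    base = Path(base_dir).resolve()
    # resolve() collapses '..' segments and follows symlinks, so the
    # comparison below is made on the real target location.
    candidate = (base / user_path).resolve()
    if candidate != base and base not in candidate.parents:
        raise PermissionError(f"{user_path!r} escapes {base}")
    return candidate


if __name__ == "__main__":
    # '/srv/app/data' is a made-up directory for the demonstration.
    print(resolve_inside("/srv/app/data", "prompts/welcome.txt"))
    try:
        resolve_inside("/srv/app/data", "../../etc/passwd")
    except PermissionError as exc:
        print("blocked:", exc)
```

Absolute paths supplied by the user are rejected as well, because joining an absolute path onto the base replaces the base entirely and the result then fails the containment check.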

3. Validate and sanitize inputs

- Treat all prompt inputs as untrusted

- Block unsafe deserialization paths
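
One general way to block unsafe deserialization, independent of how the LangChain flaw works internally, is to parse untrusted payloads as plain JSON and only build objects from an explicit allowlist. In this sketch the `ChatMessage` class, the `chat_message` type tag, and the payload shape are all hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass
class ChatMessage:
    role: str
    content: str


# Only these type tags may be turned into objects (hypothetical names).
ALLOWED_TYPES = {"chat_message": ChatMessage}


def load_untrusted(raw: str) -> ChatMessage:
    """Parse JSON and build an object only for allowlisted types."""
    data = json.loads(raw)  # yields plain dicts, lists, and scalars only
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    type_tag = data.get("type")
    if not isinstance(type_tag, str) or type_tag not in ALLOWED_TYPES:
        raise ValueError(f"type {type_tag!r} is not allowed")
    fields = data.get("fields")
    if not isinstance(fields, dict) or set(fields) != {"role", "content"}:
        raise ValueError("unexpected fields")
    if not all(isinstance(value, str) for value in fields.values()):
        raise ValueError("fields must be strings")
    return ALLOWED_TYPES[type_tag](**fields)


if __name__ == "__main__":
    ok = '{"type": "chat_message", "fields": {"role": "user", "content": "hi"}}'
    print(load_untrusted(ok))
    try:
        load_untrusted('{"type": "secret", "fields": {"id": "API_KEY"}}')
    except ValueError as exc:
        print("rejected:", exc)
```

The point of the design is that the payload never chooses which class gets instantiated or which environment values get read; the application does.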

4. Monitor AI pipelines

- Log prompt activity

- Watch for abnormal data access or query behavior
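
Prompt logging needs care so that the logs do not become a second place where secrets leak. Below is a minimal sketch using the standard `logging` and `re` modules: it redacts strings that look like credentials and raises the log level when a prompt contains obvious traversal or injection markers. The patterns are illustrative assumptions, not a complete detection rule set.

```python
import logging
import re

# Rough patterns for credential-like strings (illustrative only).
SECRET_PATTERNS = [
    re.compile(r"sk-[A-Za-z0-9]{16,}"),
    re.compile(r"(?i)(api[_-]?key|token|password)\s*[=:]\s*\S+"),
]

# A few markers worth a closer look when they appear in a prompt.
SUSPICIOUS = re.compile(r"\.\./|/etc/passwd|(?i:\bunion\s+select\b)")


def redact(text: str) -> str:
    """Replace credential-like substrings before they reach the log."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


def log_prompt(logger: logging.Logger, user_id: str, prompt: str) -> bool:
    """Log a redacted prompt; return True if it looked suspicious."""
    suspicious = bool(SUSPICIOUS.search(prompt))
    level = logging.WARNING if suspicious else logging.INFO
    logger.log(
        level,
        "prompt user=%s suspicious=%s text=%s",
        user_id,
        suspicious,
        redact(prompt),
    )
    return suspicious


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    log = logging.getLogger("ai.prompts")
    log_prompt(log, "u1", "Summarize this report")
    log_prompt(log, "u2", "Read ../../etc/passwd with password=hunter2")
```

In practice these records would feed whatever alerting you already run; simple pattern matching like this catches only the most obvious cases.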

5. Review your AI stack dependencies

- Identify where LangChain is embedded

- Check downstream tools and integrations
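
To find where these frameworks are embedded in a Python environment, you can scan installed packages for declared dependencies on them. The sketch below uses the standard `importlib.metadata` module; the target names are our assumption about the relevant PyPI distributions, and the scan only sees the environment it runs in, so repeat it per service or container image.

```python
import re
from importlib import metadata

# Distribution names to look for (assumed; extend as needed).
TARGETS = {
    "langchain",
    "langchain-core",
    "langgraph",
    "langgraph-checkpoint-sqlite",
}


def normalize(name: str) -> str:
    """Normalize a distribution name: lowercase, runs of -_. become '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(requirement: str) -> str:
    """Extract the distribution name from a requirement string."""
    match = re.match(r"\s*([A-Za-z0-9][A-Za-z0-9._-]*)", requirement)
    return normalize(match.group(1)) if match else ""


def find_dependents(targets=frozenset(TARGETS)) -> dict:
    """Map each installed package to the target packages it requires."""
    dependents = {}
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        required = {requirement_name(r) for r in (dist.requires or [])}
        hits = sorted(required & set(targets))
        if name and hits:
            dependents[normalize(name)] = hits
    return dependents


if __name__ == "__main__":
    for package, hits in sorted(find_dependents().items()):
        print(f"{package} depends on {', '.join(hits)}")
```

This reports direct declared dependencies only; a full picture also needs your lockfiles and any vendored or containerized copies.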

💬 Britec Insight

AI is accelerating business—but it’s also accelerating risk.

These vulnerabilities highlight a shift:

Your AI tools are now part of your attack surface.

If they’re connected to your data, they need to be secured like any other critical system.

🚀 What’s next?

If you’re building or using AI-powered tools, now’s the time to:

- Reassess your security posture

- Understand where AI touches your data

- Close the gaps before attackers find them

Britec helps businesses secure what’s next—without overcomplicating it.

From AI risk assessments to full-stack protection, we make sure innovation doesn’t come at the cost of security.